Can We Secure AI With Formal Methods? November-December 2025

Give thanks for benchmarks with “Veri-” in the title.

We did the rebrand! The previous thumbnail was a baseball metaphor, but it was very clearly someone getting out, not safe. I was testing all of you and each of you FAILED.

Here’s the prompt for the new thumbnail:

i’m keeping AI in a box, doing AI CConfinement (like in yampolskiy 2012), using formal verification / formal methods. That’s my whole thing. I need art for my newsletter on these topics. I like the percival story from troyes/wagner and i like tolkien, but if you take from those elements put it IN SPACE like scifi. Also use german expressionist painting styles. Ok now give me some DALLE art.

So long “Progress in GSAI”. I still like the position paper that the old newsletter title was based on, but

It’s very scifi and I think there’s more alpha in obvious/relatively easy/uncontroversial (but not done by default) work.

The word “guarantee” doesn’t evoke “swiss cheese”.

It’s time to double down on relationships between AI security and formal methods, directly, more explicitly than you can do within the framing of GSAI.

Also notice: gsai.substack.com is now a redirect to newsletter.for-all.dev. I’ll be hosting a bunch of my technical reports and comms/strategy outputs at that domain going forward (the subdomain newsletter will just point to substack). But don’t worry, the scope of the newsletter remains largely the same (excepting the pivot to be more directly and explicitly about AI security) / won’t devolve into being any more nakedly self promotional than it has been so far.

I received a grant from a Funder of Presently Undisclosed Provenance to do comms and strategy for AI security via formal methods, which means among other things that this newsletter will get a little more TLC.

Busy month, I expect things to be slow over christmas, after this edition I’ll see you all in 2026.

In the spirit of chivalry, I styletransferred most abstracts in this edition of the newsletter to Troyes/Cervantes style. I did not check to see if Gemini got anything wrong, but every headline is a link to arxiv or openreview which you’ll click if you’re interested.

Miri’s treaty team posts a paper!

Excited about this. They use the word “verification” in a different context then we do, they mean it in the sense of verifying the absence of enriched uranium (GPUs) or verifying that the terms of a treaty are being abided by.

Many experts argue that premature development of artificial superintelligence (ASI) poses catastrophic risks, including the risk of human extinction from misaligned ASI, geopolitical instability, and misuse by malicious actors. This report proposes an international agreement to prevent the premature development of ASI until AI development can proceed without these risks. The agreement halts dangerous AI capabilities advancement while preserving access to current, safe AI applications.

The proposed framework centers on a coalition led by the United States and China that would restrict the scale of AI training and dangerous AI research. Due to the lack of trust between parties, verification is a key part of the agreement. Limits on the scale of AI training are operationalized by FLOP thresholds and verified through the tracking of AI chips and verification of chip use. Dangerous AI research--that which advances toward artificial superintelligence or endangers the agreement’s verifiability--is stopped via legal prohibitions and multifaceted verification.

We believe the proposal would be technically sufficient to forestall the development of ASI if implemented today, but advancements in AI capabilities or development methods could hurt its efficacy. Additionally, there does not yet exist the political will to put such an agreement in place. Despite these challenges, we hope this agreement can provide direction for AI governance research and policy.

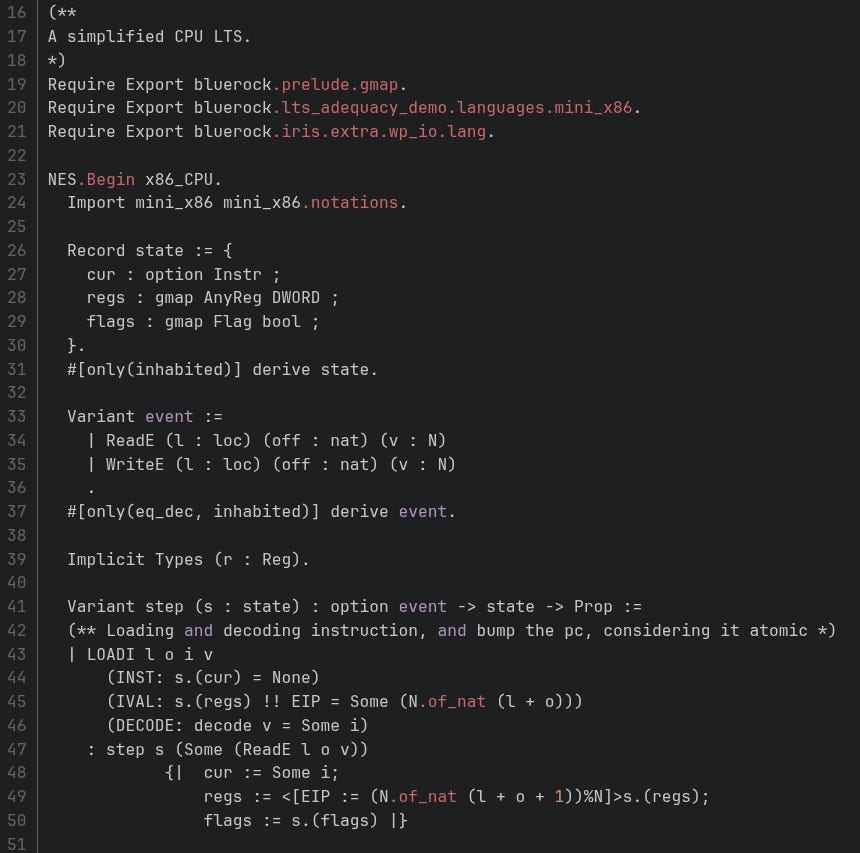

BlueRock GPLs the specs and proofs of NOVA

Three. Great. Blog posts. The third one of interest for insight into maintenance and repair of a spec and proof codebase.

NOVA is the legendary hypervisor that was specified and proven correct at BlueRock (FKA Bedrock). I say “legendary” because as a wee lad, stalking Bedrock’s github activity, hearing rumors about C++ verification, it was one of the few Ws of industrial verification at scale that I had heard about.

Look at that B-E-A-YOOT.

A hypervisor is a part of the virtual machine stack. NOVA is a hardened one for critical systems, technically a microhypervisor.

We should teach AIs to write this stuff, cuz that looks painful to type.

We don’t talk enough about separation logic here on the newsletter. Anyways,

People are playing with Aristotle, Harmonic is hiring

$120M series C.

Hardware is an interesting product area! Looks like their business model has advanced past the “mumbling to investors about curryhoward” stage. 2025, the year of mumbling to investors about curryhoward, has come to a roar of a close. I have also mumbled about curryhoward to my dearest yall, which might mean I get bayes points for a math company starting to spin up a program synthesis product. I can’t tell how obvious that sort of claim was, or is, but I know one thing: I love getting points.

If you have Aristotle access, please test FVAPPS and report back. Be sure to append the unit tests, that’s like the hardest part of the benchmark.

If I had a nickel for every benchmark prefixed “Veri-” it’d only be four nickels but it’s still weird that it happened four times

Some of these I had no good reason not to cover earlier. Abstracts styletransferred by Gemini.

Vericoding

We do hereby present and test the largest ledger of trials yet assembled for the craft known as Vericoding—the generation of a code whose certainty is sworn upon by the very stars—from the formal scrolls of specification. This, mind you, is in stark contrast to the common, wicked Vibe Coding, which spews forth a quick but bug-ridden script, born of a mere whisper of natural tongue.

Our grand ledger contains twelve thousand five hundred and four such scrolls of specification, with three thousand and twenty-nine written in the ancient runes of Dafny, two thousand three hundred and thirty-four in the sturdy tongue of Verus/Rust, and seven thousand one hundred and forty-one in the subtle logic of Lean. Of these, a full six thousand one hundred and seventy-four are entirely new, untarnished challenges.

We find that the success rate of this noble Vericoding, when performed by the Sorcerers of Language (our off-the-shelf LLMs), stands at a meager 27% in Lean, rises to 44% in Verus/Rust, and achieves a triumphant 82% in Dafny. Alas, the addition of a common, flowery natural-tongue description does not notably sharpen their success. Furthermore, the light of these Sorcerers has illuminated the pure path of Dafny verification, raising its former success rate from a humble 68% to a glorious 96% over the past twelve moons.

Veribench

The Formal Verification of Software doth stand as a promise most bright—a potential transformation wrought by the Generative Artifice of the Mind (AI). For a Provably Correct Code would utterly banish entire legions of hidden vulnerabilities, staunch the fatal breaches of critical systems, and, perhaps, forever change the practice of software engineering through trustworthy methods of implementation.

To spur this sacred domain, we unveil VeriBench, a trial meticulously crafted for judging the strength of the Sorcerers’ Models in the end-to-end verification of the Code. This task demands the generation of complete Lean 4 incantations—the working functions, the unit tests, the Theorems of Correctness, and the Formal Proofs themselves—all drawn from humble Python reference spells or their accompanying common-tongue docstrings.

Our scrutiny of this one hundred and thirteen-task suite (comprising the tasks of HumanEval, simple drills, classical algorithms, and security snares) reveals a woeful truth: the current Frontier Sorcerers compile but a small fraction of the programs. Claude 3.7 Sonnet achieves compilation on a mere 12.5%, while the mighty LLaMA-70B cannot compel a single program to compile in the Lean 4 HumanEval subset, even after fifty attempts guided by feedback! Yet, observe the noble Self-Optimizing Trace Agent architecture, whose compilation rates approach a magnificent 60%! VeriBench thus lays the unyielding stone for developing systems capable of synthesizing provably correct, bug-free code, thus advancing the journey toward a more secure and dependable digital kingdom.

VerifyThisBench

While the Grand Language Models (LLMs) have shown marvelous cunning in the quick generation of code, many existing trials are now easily conquered, and offer little guarantee of trustworthiness for the generated programs. To gain greater insight into the Sorcerers’ reasoning on matters of Formal Correctness, we present VerifyThisBench, a new, agonizing trial which assesses the end-to-end verification of programs from mere natural-tongue descriptions.

The models must complete a trifecta of chivalric deeds: (i) Extract the Formal Specifications, (ii) Implement the Code in a language that craves verification, and (iii) Construct the Machine-Checkable Proofs.

Our evaluation reveals that even the most vaunted of the modern models, such as o3-mini, achieve a pass rate of less than 4%, with many of their utterances failing to even compile! To divine the true source of this difficulty, we further propose VerifyThisBenchXS, a milder variant where partial implementations or proofs are benevolently supplied. Across nine distinct models and seven tools of verification, we observe a steady gain when refinement is driven by the whispers of feedback, yet the overall pass rates remain pitifully low, underscoring the vast chasms that yet divide the Sorcerers from true formal reasoning. We release this trial and its unified environment to spur on the verification powers of all future models.

VeriEquivBench

Formal Verification stands as the ultimate frontier for ensuring the veracity of the code spawned by the Grand Language Models (LLMs). Methods that co-generate the code and the formal specifications in austere formal languages, such as Dafny, can, in theory, swear upon the truth of their alignment with the user’s intent. Alas, the entire progress is stifled by the difficulty of judging the quality of the specifications themselves.

Current trials rely upon the perilous task of matching the generated work against a ground-truth specification—a manual process requiring deep expertise, which has limited existing datasets to a mere few hundred simple problems, and moreover suffers from a profound lack of reliability.

To remedy this, we introduce VeriEquivBench, a new trial featuring two thousand three hundred and eighty-nine complex algorithmic puzzles designed to expose the frailty of current models in both the generation of code and the deep formal reasoning. Our evaluative framework replaces the perilous ground-truth matching with a formally grounded metric: the Equivalence Score, and rigorously verifies the quality of the generated specifications and code. Our findings declare that the generation of formally verifiable code remains a profound challenge for the state-of-the-art Sorcerers. This underscores both the sheer difficulty of the task and the desperate need for trials like VeriEquivBench to hasten the march toward scalable and trustworthy coding agents.

From Galois’ blog

Specifications don’t exist

Should’ve been in last newsletter but slipped through the cracks.

We need words for the different pessimisms about FMxAI. I often talk about the world-spec gap or the world-spec problem (that formal methods don’t rule out sidechannel attacks). This post is about a different pessimism, the elicitation problem or the elicitation and validation problem. Someone should absolutely be funding an org to focus on elicitation and validation, it’s a turbo important part of the theory of change. Is anyone working on this?

Lean and claude code

Mike also has a technical post about vibecoding in Lean.

Pair it with these off the shelf “skills” (a claude code feature that’s “just prompts with extra steps”).

Rigorous Digital Engineering

What if proof engineering but too cheap to meter?

Oops i missed Logical Intelligence

Should’ve covered these folks a while ago. Yes, it appears their clientele is crypto/defi, but I have a generally positive attitude about life and I don’t want to set my “days since snark incident” counter back to zero, so we will ignore that and focus on the little we can ascertain about their tech and their claims.

There are two parts to this, there’s the part of why/how exactly they believe what they believe about their Lean product, and the part of how their Noa agent (which is not paywalled, you can just install it on github) fits into my strategic worldview.

Primitive screwheads: text-to-text. My boomstick: structural synthesis

Logical Intelligence is not bullish on autoregressive text-to-text as a program synthesis paradigm. Like Leni Aniva, they think tree search (starting with MCTS) will beat LLMs in the fullness of time. The interesting part, with a very paywalled model that I can’t test, is if they’re right why isn’t Harmonic (or Morph or a frontier company or anyone else) scooping them? It’s the same thing I say when I look at HOC: yes, text-to-text is an uncivilized approach to program synthesis, but we haven’t welded structural synthesis with the bitter lesson yet, and I don’t expect to see the gains until we do. If it could be any other way, then we’d be living in the GOFAI Extended Cinematic Universe instead of the Prompts Extended Cinematic Universe. I could write down some loose ideas of things you could try (to achieve the welding), but I will not because I’m unconvinced the d/acc case is actually the majority of the mass. I’m too concerned that Logical Intelligence, HOC, to some extent Leni are right about the superpower unleashed by structure-aware program synthesis and I don’t think we’re ready (as a QA/safety community, nor as a society).

Analyzing codebases for vulnerabilities

From their product page:

Ordering an external audit is both very expensive and very time-consuming. Our AI tool, Noa, delivers regular feedback on your code—minutes for smaller codebases and tens of minutes for larger ones. This lets you get near-real-time insight into the most critical potential security risks at a fraction of the cost. Noa integrates with GitHub: simply add the Noa bot to your repository, and after each pull request you can request a dashboard showing potential risks across the entire repository, along with their likelihood of exploitation and severity ratings.

I have a post coming out about this, but I think the sort of thing they’re trying to do here is an important part of the strategic outlook. Audits, cryptanalysis, cybersecurity consulting are an important area to automate if we’re going to know, with a finite proof synthesis budget, which components are the most critical to harden with proofs. To be clear, I have not used the product, I don’t have any codebases it’s a good fit for. But it’s a class of product I’m excited about, even (ugh) if it is (ew) for defi/crypto.

Announcements from the first round of Mathematics for Safe AI Opportunity Space at ARIA

Spot ole q doc somewhere on this page! Other highlights are the hardware verification team, the GFLowNet/SynthStats team, and the SFBench team.

Scalable synthesis of theorem proving challenges in formal-informal pairs

Apparently there was some twitter discourse about this paper but one of the discoursers was using a hidden profile. It’d be great to be more like a Zvi style newsletter full of twitter screenshots, that would just require me to log onto to twitter more, which like, no.

The Grand Confluence of Lean and the Scholarly Arts of Computation: A Fount of Trials for the Sorcerer’s Mind– The noble art of Formal Theorem Proving (FTP) hath risen as a cornerstone for judging the deep reasoning capabilities of the Grand Language Models (LLMs), enabling the automated verification of mathematical oaths upon a massive scale. Yet, the progress of this quest has been hindered by a scarcity of suitable archives, due to the high toll of manual curation and the lack of truly challenging dilemmas paired with verified correspondences between Formal Scroll and Informal Chronicle. We propose to tap into the wellspring of Theoretical Computer Science (TCS) as a boundless source of rigorous proof problems. Within this scholarly domain, the definitions of algorithms permit the automatic synthesis of an arbitrary number of complex Theorem-Proof pairs. We demonstrate this potent approach upon two realms of TCS: the Busy Beaver problems, which demand the proof of bounds upon a Turing Machine’s cessation of movement, and the Mixed Boolean Arithmetic problems, which entwine the logic of the mind with the rigor of number. Our framework automatically weaves these challenges, providing parallel specifications: the Formal Code (Lean4) and the Informal Narrative (Markdown), thus creating a scalable conduit for generating verified trials of proof. Scrutiny of the frontier models reveals substantial chasms in their automated theorem-proving prowess: while the champion DeepSeekProver-V2-671B achieves a noble 57.5% success rate on the Busy Beaver challenges, its strength wanes, managing only 12% on the Mixed Boolean Arithmetic puzzles. These findings illuminate the great difficulty of crafting long-form proofs, even for those problems whose computational verification is a mere trifle, thus showcasing the invaluable role of TCS realms in advancing the research of automated reasoning.

AI Resilience: cyberphysical systems

Friend of the newsletter Nora Ammann published AI Resilience a little bit ago. The section on cyberphysical systems is relevant to us: it relies on secure (formally verified) program synthesis becoming cheap and accessible. Resilience is a flavor of defensive acceleration that specifically targets the durable and structural resolution of vulnerabilities, vulnerabilities which get amplified by AI but which, if we’re diligent and hardworking, get ameliorated by AI as well.

Let’s formalize this step by step

One time a friend asked me “why not just put the proof synthesis in the reasoning trace and the thing you’re writing the proof about (say, a program) in the final output“. And I was like, “...huh”. And I got as far as adding a few credits to my runpod account before getting pulled into other things. Little did I know, at exactly that moment, this team was hard at work!

A Proposal for Safe Passage: The Formal Verification of the Grand Sorcerers’ Thoughts– The method of the Chain-of-Thought (CoT) prompting hath become the established ritual for coaxing forth the reasoning powers from the Grand Language Models (LLMs). Yet, to contain the hallucinations in these Chains—phantoms notoriously difficult to discern—the current remedial arts, such as the Process Reward Models (PRMs) or the Self-Consistency measures, operate as opaque boxes, offering no verifiable evidence for their judgments, thus perhaps limiting their true efficacy. To redress this failing, we draw inspiration from the ancient wisdom that “the gold standard for supporting a mathematical claim is to provide a proof.” We propose a retrospective, step-aware framework of Formal Verification which we title Safe. Rather than assigning arbitrary scores or marks, we strive to articulate the mathematical claims within the formal mathematical language of Lean 4 at the conclusion of each reasoning step, and further provide formal proofs to definitively identify these hallucinations. We test our framework Safe across various models and mathematical archives, demonstrating a significant enhancement in their performance, while simultaneously offering interpretable and verifiable evidence for their passage. Furthermore, we propose FormalStep as a new trial for the correctness of step-by-step theorem proving, containing 30,809 formal statements. To the best of our knowledge, our work represents the first valiant endeavor to utilize the formal mathematical language of Lean 4 for verifying the natural-tongue content generated by the LLMs, thereby aligning with the very reason these formal languages were created: to provide a robust and unshakeable foundation for the hallucination-prone proofs scribed by human hands.

Ulyssean website mission status: totally sick

There’s honestly no Ulyssean update in this issue, but I stumbled upon their website and loved the graphic design!

I'm digging into all of this (just found you... hi!), but I wanted to comment, before I forgot, that I love the Troyes style of the abstract transfers.

I actually just read _Erec et Enide_ recently (English primarily but also some parts in the Old French) and it's just too delightful of a coincidence to me not to comment on.